Cost Optimization and Performance: Architecting for Sustainability

Cost Optimization and Performance in Data Pipelines

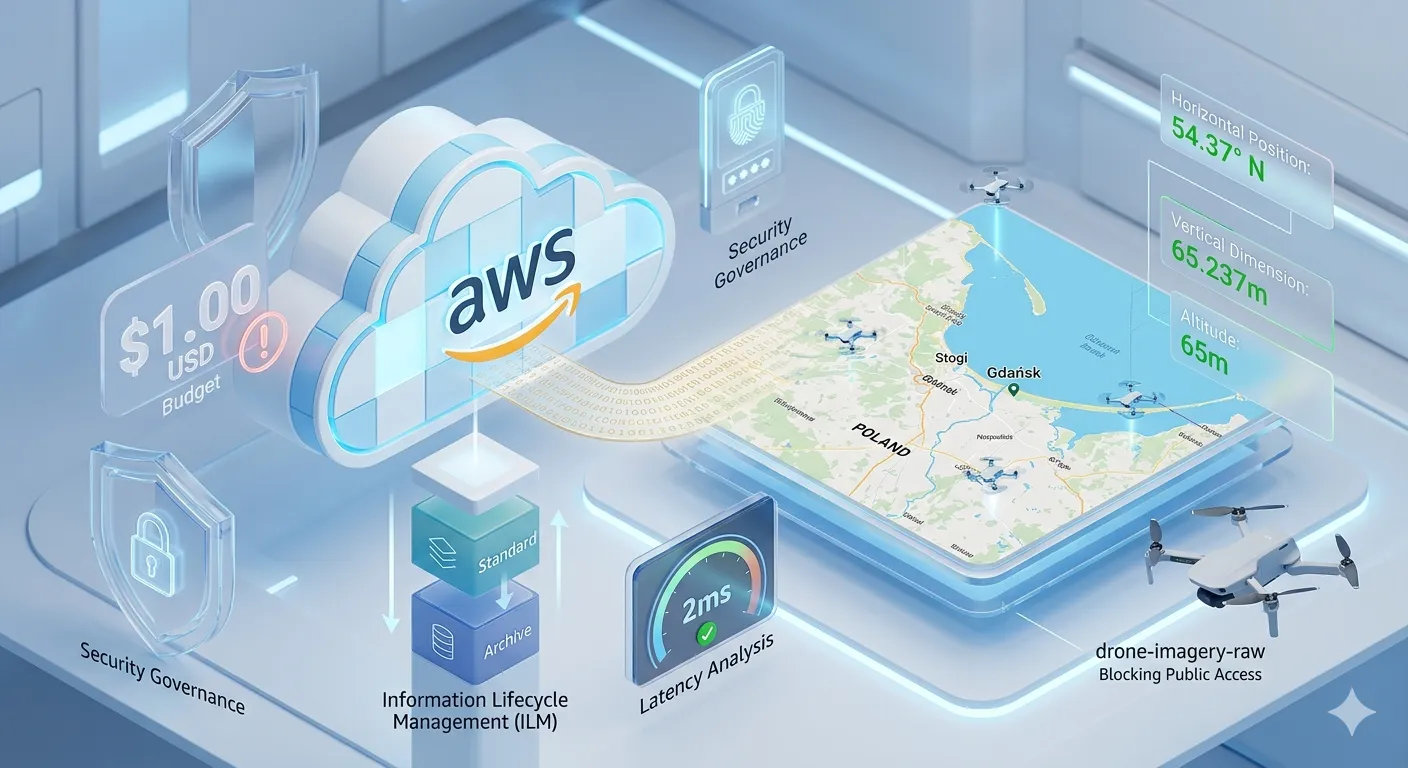

In this fourth installment of my drone data automation series, we’re shifting focus from visualization to a critical pillar of professional architecture: Operational Efficiency.

Scaling a cloud project often fails not because of code, but because of resource mismanagement. Here is how I configured my infrastructure to be financially sustainable and technically superior.

1. Governance and Budgetary Control

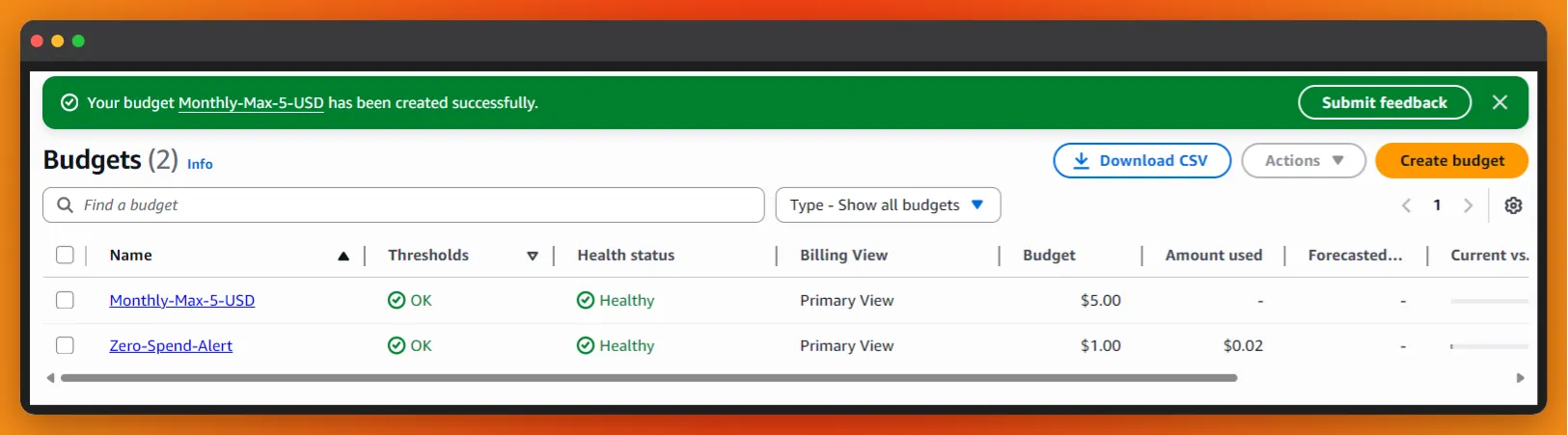

Cloud governance starts with visibility. I’ve set up AWS Budgets with a monthly budget for the project. It works as a financial tripwire rather than a hard cap: it sends automated alerts when spend crosses the thresholds I define, but it does not stop resources by itself.

Technical Setup: I’ve defined alert thresholds at 80% and 100% of the budget, so a spike in activity gets noticed early and the experimentation phase doesn’t turn into an unexpected bill.
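For readers who prefer code over console clicks, here is a minimal sketch of the same two thresholds as a request for the AWS Budgets `CreateBudget` API. The budget name, account ID, amount, and email address are placeholders I made up for illustration, and alerting on actual (not forecasted) spend is an assumption.

```python
def build_budget_request(account_id, monthly_limit_usd, email,
                         thresholds=(80.0, 100.0)):
    """Build keyword arguments for the AWS Budgets CreateBudget call."""
    notifications = [
        {
            "Notification": {
                "NotificationType": "ACTUAL",          # assumption: alert on actual spend
                "ComparisonOperator": "GREATER_THAN",
                "Threshold": float(pct),               # percentage of the budget limit
                "ThresholdType": "PERCENTAGE",
            },
            "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
        }
        for pct in thresholds
    ]
    return {
        "AccountId": account_id,
        "Budget": {
            "BudgetName": "drone-pipeline-monthly",    # hypothetical name
            "BudgetLimit": {"Amount": f"{monthly_limit_usd:.2f}", "Unit": "USD"},
            "TimeUnit": "MONTHLY",
            "BudgetType": "COST",
        },
        "NotificationsWithSubscribers": notifications,
    }


if __name__ == "__main__":
    # Placeholder account ID, amount, and address.
    request = build_budget_request("123456789012", 20.0, "alerts@example.com")
    for item in request["NotificationsWithSubscribers"]:
        print("alert at", item["Notification"]["Threshold"], "% of budget")
    # To apply it (requires boto3 and AWS credentials):
    #   import boto3
    #   boto3.client("budgets").create_budget(**request)
```

Building the request as plain data keeps it easy to review and version before anything touches the account.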

Figure 1: Setting up proactive financial guardrails using AWS Budgets to maintain full visibility over cloud consumption.

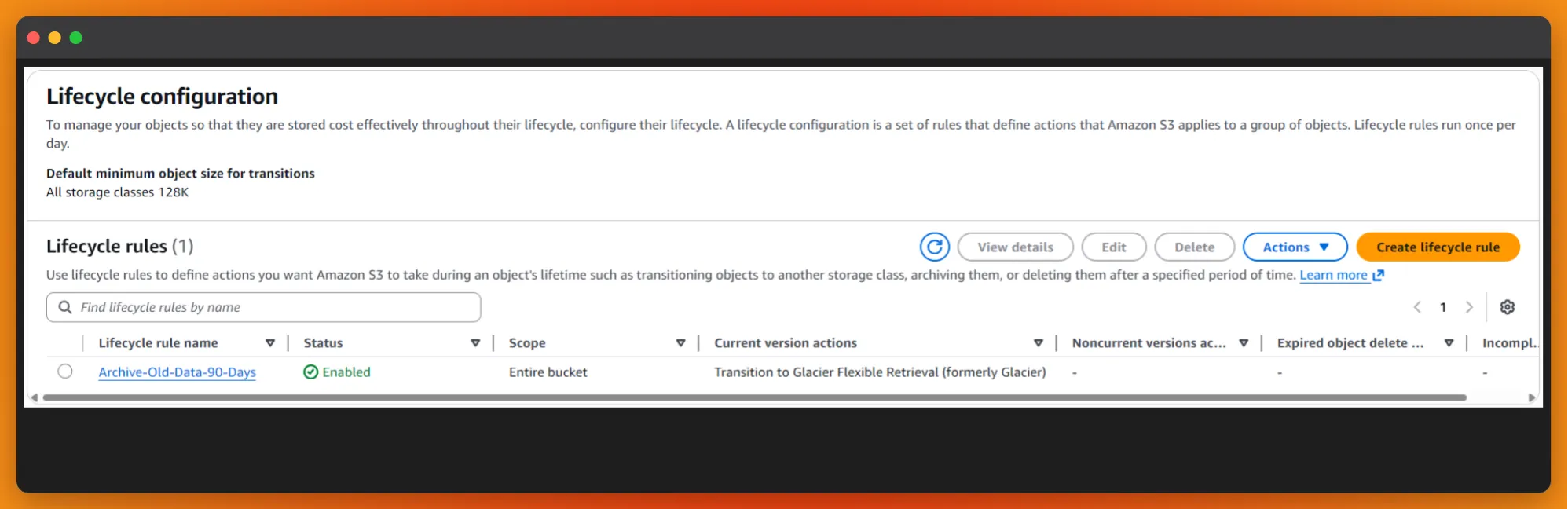

2. Information Lifecycle Management (ILM)

Not all data retains the same value over time. To optimize the storage footprint and follow industry-standard ILM principles, I’ve configured an S3 Lifecycle Policy:

- Active Phase: New drone imagery resides in S3 Standard for immediate processing and access.

- Archive Phase (90 days): Objects automatically transition to S3 Glacier Flexible Retrieval.
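The two phases above could be expressed as a lifecycle configuration like the sketch below. The rule ID, the empty prefix (meaning the rule applies to every object), and the bucket name in the comment are assumptions for illustration; `GLACIER` is the storage class identifier S3 uses for Glacier Flexible Retrieval.

```python
def build_lifecycle_configuration(prefix="", archive_after_days=90):
    """Build an S3 lifecycle configuration with a single archive transition."""
    return {
        "Rules": [
            {
                "ID": "archive-drone-imagery",      # hypothetical rule name
                "Status": "Enabled",
                "Filter": {"Prefix": prefix},       # "" matches all objects
                "Transitions": [
                    # Objects stay in S3 Standard until this many days after creation.
                    {"Days": archive_after_days, "StorageClass": "GLACIER"}
                ],
            }
        ]
    }


if __name__ == "__main__":
    config = build_lifecycle_configuration()
    print(config["Rules"][0]["Transitions"])
    # To apply it (requires boto3 and AWS credentials; bucket name is a placeholder):
    #   import boto3
    #   boto3.client("s3").put_bucket_lifecycle_configuration(
    #       Bucket="my-drone-imagery-bucket",
    #       LifecycleConfiguration=config,
    #   )
```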

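This tiering strategy cuts the per-GB storage price of archived imagery by roughly 80% without compromising data integrity, so long-term archival doesn’t drain the project’s budget. The trade-off is access time: archived objects must be restored before they can be read, which suits imagery that is rarely touched after processing.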
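To see where a figure of around 80% can come from, here is a rough back-of-the-envelope sketch. The two per-GB monthly prices are assumptions based on commonly published list prices; they vary by region and change over time. The calculation also ignores request, transition, and retrieval fees, and treats 90 days as three months.

```python
# Assumed list prices in USD per GB-month; check current pricing for your region.
STANDARD_PRICE = 0.023
GLACIER_FLEXIBLE_PRICE = 0.0036


def per_gb_saving(standard_price, archive_price):
    """Fractional saving on storage price once an object is archived."""
    return 1 - archive_price / standard_price


def tiered_saving(months_total, months_in_standard,
                  standard_price=STANDARD_PRICE,
                  archive_price=GLACIER_FLEXIBLE_PRICE):
    """Fractional saving over a retention period versus keeping data in Standard."""
    all_standard = months_total * standard_price
    tiered = (months_in_standard * standard_price
              + (months_total - months_in_standard) * archive_price)
    return 1 - tiered / all_standard


if __name__ == "__main__":
    print(f"steady-state saving per GB: {per_gb_saving(STANDARD_PRICE, GLACIER_FLEXIBLE_PRICE):.1%}")
    for months in (12, 36, 60):
        print(f"{months} months retained: {tiered_saving(months, 3):.1%}")
```

Under these assumptions the saving in the first year is lower than the steady-state figure, because every object spends its first three months in Standard; the longer the imagery is retained, the closer the overall saving gets to the per-GB difference.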

Figure 2: Automating data tiering: Moving from Standard to Glacier to optimize long-term storage costs.

3. Latency Analysis in Serverless Compute

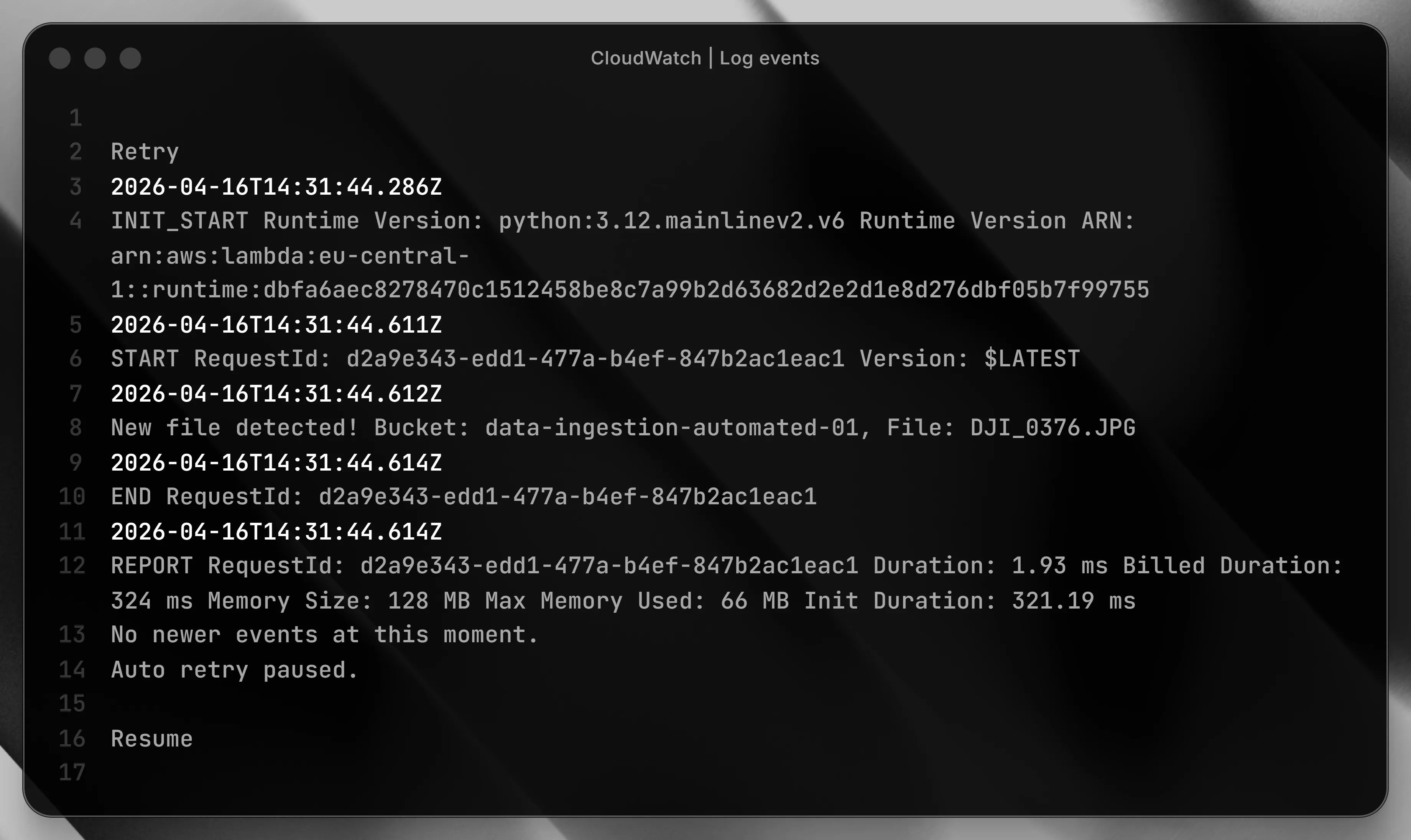

In event-driven architectures, code performance directly impacts the bottom line. Auditing the execution of my telemetry extraction via Amazon CloudWatch Logs yielded high-efficiency results:

- Execution Duration: 1.93 ms.

- Memory Usage: 66 MB of the allocated 128 MB.
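If the function runs on AWS Lambda (an assumption here, since the numbers match what Lambda reports), these two values come from the `REPORT` line that Lambda writes to CloudWatch Logs after each invocation. The sketch below pulls them out with a regular expression. The sample line is illustrative only: it is reconstructed from the figures above with a placeholder request ID, not copied from my logs.

```python
import re

# Matches the duration and memory fields of a Lambda REPORT log line.
REPORT_PATTERN = re.compile(
    r"Duration: (?P<duration_ms>[\d.]+) ms\s+"
    r"Billed Duration: (?P<billed_ms>\d+) ms\s+"
    r"Memory Size: (?P<memory_mb>\d+) MB\s+"
    r"Max Memory Used: (?P<used_mb>\d+) MB"
)

# Illustrative sample with a placeholder request ID.
SAMPLE_LINE = (
    "REPORT RequestId: 00000000-0000-0000-0000-000000000000\t"
    "Duration: 1.93 ms\tBilled Duration: 2 ms\t"
    "Memory Size: 128 MB\tMax Memory Used: 66 MB"
)


def parse_report_line(line):
    """Return duration and memory metrics from a REPORT line as numbers."""
    match = REPORT_PATTERN.search(line)
    if match is None:
        raise ValueError("not a Lambda REPORT line")
    return {
        "duration_ms": float(match["duration_ms"]),
        "billed_ms": int(match["billed_ms"]),
        "memory_mb": int(match["memory_mb"]),
        "used_mb": int(match["used_mb"]),
    }


if __name__ == "__main__":
    metrics = parse_report_line(SAMPLE_LINE)
    utilization = metrics["used_mb"] / metrics["memory_mb"]
    print(metrics, f"memory utilization: {utilization:.0%}")
```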

Running each invocation in under 2 ms makes it possible to process massive data volumes with negligible economic impact. This efficiency is the true power of the serverless model: you pay only for what you use, with compute billed down to the millisecond.
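To put a number on "negligible", here is a rough cost sketch for the measurements above. It assumes AWS Lambda pricing with billing rounded up to the next millisecond; the per-GB-second and per-request prices are assumed list prices that vary by region and architecture, and the free tier is ignored.

```python
import math

# Assumed list prices in USD; check current pricing for your region.
PRICE_PER_GB_SECOND = 0.0000166667
PRICE_PER_MILLION_REQUESTS = 0.20


def lambda_cost_usd(invocations, duration_ms, memory_mb,
                    price_per_gb_second=PRICE_PER_GB_SECOND,
                    price_per_million_requests=PRICE_PER_MILLION_REQUESTS):
    """Return (compute_cost, request_cost) in USD for a batch of invocations."""
    billed_ms = math.ceil(duration_ms)  # duration is billed in whole milliseconds
    gb_seconds = invocations * (billed_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * price_per_gb_second
    request_cost = invocations / 1_000_000 * price_per_million_requests
    return compute_cost, request_cost


if __name__ == "__main__":
    compute, requests = lambda_cost_usd(1_000_000, duration_ms=1.93, memory_mb=128)
    print(f"compute:  ${compute:.4f} per million invocations")
    print(f"requests: ${requests:.4f} per million invocations")
```

With these assumed prices, the compute charge for a million invocations is a fraction of a cent, and the flat per-request fee is the larger part of the bill.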

Figure 3: Performance Audit: CloudWatch logs showing ultra-low latency execution, critical for high-volume data processing.

Conclusion

Architecture is not just about connecting services; it’s about finding the perfect balance between performance and cost. An infrastructure that manages and optimizes itself is the key to scaling any modern data engineering project.

By implementing strict governance and automated lifecycle management, we ensure that the system remains viable as the data volume grows.

Is your cloud infrastructure leaking budget? Let’s connect to optimize your AWS stack for performance and cost.